Weekly Report [2]

Jinning, 07/25/2018

[Newest Research Proposal]

[Project Github]

Determined Research Topic

My research topic is Criteo Ad Placement. Each record in the Criteo dataset has the format \((c, a, \delta, p)\), which is the same format used by BanditNet.

- \(c\) contextual feature

- \(a\) action from Criteo policy

- \(\delta\) loss (click or not)

- \(p\) propensity. \(p(slot1=p)=\frac{f_p}{\sum_{p'\in P_c}f_{p'}}\)
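As a small illustration of the propensity formula in the last item, the sketch below normalizes a vector of per-candidate scores \(f_p\) into slot-1 probabilities. The function name and the example scores are hypothetical; only the normalization itself comes from the formula.

```python
import numpy as np

def slot1_propensities(f):
    """Normalize per-candidate scores f_p so they sum to one."""
    f = np.asarray(f, dtype=float)
    return f / f.sum()

# Hypothetical scores for three candidates in one impression.
probs = slot1_propensities([2.0, 1.0, 1.0])
```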

My task is to build a model \(h:c\times a \rightarrow \delta\). In other words, I compute a score for every candidate ad and then derive the policy \(\hat{\pi}\) from those scores. For example, \(\hat{\pi}=\arg \max_{a\in A}h(c, a)\).
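A minimal sketch of the arg-max rule, assuming the scores \(h(c, a)\) for one impression are already available as an array. The scores below are made up for illustration.

```python
import numpy as np

def greedy_policy(scores):
    """Return the index of the highest-scoring candidate ad."""
    return int(np.argmax(scores))

# Hypothetical scores h(c, a) for four candidate ads in one impression.
scores = np.array([0.02, 0.11, 0.07, 0.05])
chosen = greedy_policy(scores)
```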

I should try to incorporate the propensity information to reduce the bias. For example, we can let \(\hat{h}=\arg \min_w\sum_i\frac{\hat{\pi}(c_i, a_i)}{p_i}(w\phi (c_i, a_i)-\delta_i)\).
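The weighting idea can be sketched as follows. This is only an illustration: I assume the per-example error is squared so that the minimization is well defined, which the formula above does not state, and all arrays are invented toy values.

```python
import numpy as np

def weighted_squared_loss(w, phi, delta, pi_hat, p):
    """Sum over examples of (pi_hat_i / p_i) * (w . phi_i - delta_i)^2.

    The squaring is an assumption of this sketch, not taken from the report.
    """
    residual = phi @ w - delta   # prediction error per example
    weights = pi_hat / p         # importance weights
    return float(np.sum(weights * residual ** 2))

# Toy data: three logged examples with two features each.
phi = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
delta = np.array([1.0, 0.0, 1.0])
pi_hat = np.array([1.0, 0.0, 1.0])   # new policy's probability of the logged action
p = np.array([0.5, 0.25, 0.1])       # logged propensities
w = np.array([0.5, 0.0])
loss = weighted_squared_loss(w, phi, delta, pi_hat, p)
```

Examples with a small propensity \(p_i\) get a large weight, so the third example dominates the loss here.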

Paper Reading

Large-scale Validation of Counterfactual Learning Methods: A Test-Bed

@article{lefortier2016large, title={Large-scale validation of counterfactual learning methods: A test-bed}, author={Lefortier, Damien and Swaminathan, Adith and Gu, Xiaotao and Joachims, Thorsten and de Rijke, Maarten}, journal={arXiv preprint arXiv:1612.00367}, year={2016} }

This paper introduces the Criteo dataset's data format, the evaluation metric (IPS), and the baselines.

Example Data format:

example 32343877: 57702caea2a35f22a32c43a4b57e2a3057702caf1993e0165eb115f689a5b0bb 0 1.102191e-01 2 17 1:300 2:250 3:0 4:16 5:2 6:1 7:21 8:79 9:31 10:2

0 exid:32343877 11:1 12:0 13:0 14:0 15:0 16:2 23:20 24:95 25:40 32:26 35:61

0 exid:32343877 11:0 12:1 13:0 14:1 15:0 17:2 21:10 22:80 24:103 25:43 33:29 35:65

0 exid:32343877 11:0 12:1 13:0 14:1 15:0 17:2 21:10 22:54 24:103 25:43 33:29 35:65

0 exid:32343877 11:0 12:1 13:0 14:1 15:0 17:2 21:10 22:63 24:103 25:43 33:29 35:65

...

This dataset is multi-slot. To simplify the task, I will use the preprocessed CrowdAI dataset, which is single-slot. The dataset is still quite large.
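To make the candidate lines in the example above concrete, here is a small parsing sketch. It assumes the first token is a 0/1 label, the second is `exid:<id>`, and the rest are `index:value` pairs with integer values. That reading is inferred from the example lines, and the function name is hypothetical.

```python
def parse_candidate_line(line):
    """Split a candidate line into (label, example id, {feature index: value})."""
    tokens = line.split()
    label = int(tokens[0])                   # assumed to be the click label
    exid = int(tokens[1].split(":", 1)[1])   # "exid:32343877" -> 32343877
    features = {}
    for token in tokens[2:]:
        key, value = token.split(":", 1)
        features[int(key)] = int(value)
    return label, exid, features

line = "0 exid:32343877 11:1 12:0 13:0 14:0 15:0 16:2 23:20 24:95 25:40 32:26 35:61"
label, exid, features = parse_candidate_line(line)
```

The sparse `index:value` dictionary can then be turned into the one-hot style input a model expects.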

Metric

IPS metric: \[ \hat{R}(\pi)=\sum_{i=1}^{N}\delta_i\frac{\pi(y_i|x_i)}{q_i} \]
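A direct sketch of the formula above, assuming three arrays of equal length: the logged rewards \(\delta_i\), the new policy's probabilities \(\pi(y_i|x_i)\) for the logged actions, and the logged propensities \(q_i\). The numbers are toy values, and the challenge's official scorer may apply additional scaling that is not shown here.

```python
import numpy as np

def ips_estimate(delta, pi_new, q):
    """Inverse propensity scoring: sum_i delta_i * pi(y_i | x_i) / q_i."""
    return float(np.sum(delta * pi_new / q))

delta = np.array([1.0, 0.0, 1.0])    # click or not
pi_new = np.array([0.5, 1.0, 0.0])   # new policy's probability of the logged action
q = np.array([0.25, 0.5, 0.1])       # logging policy's propensity
r_hat = ips_estimate(delta, pi_new, q)
```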

Experiment

Try the code of FTRL

I tried the code of the online learning model FTRL, which ranked first in the NIPS ’17 Workshop: Criteo Ad Placement Challenge (his Github). I reproduced his result: IPS=55.725.

Write my own code of LR

I wrote my own Logistic Regression (MLP) code with Pytorch (GPU). The newest project code can be found here: Project Github. It is a well-developed framework and is easy to extend to other models such as FM and deep learning.

My first logistic regression model receives a one-hot input of size Batch * Dim for each candidate ad, where Dim is the dimension of the features. The input goes through a linear layer of dimension Dim with ReLU activation, then a linear layer of dimension 4096, followed by a sigmoid function. Binary Cross Entropy (BCE) loss is applied.
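The last two steps, the sigmoid and the BCE loss, can be sketched in plain NumPy. This is not the project's Pytorch code, only an illustration of what the loss computes on toy values.

```python
import numpy as np

def sigmoid(z):
    """Map raw scores to probabilities in (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))

def bce_loss(logits, targets, eps=1e-12):
    """Mean binary cross entropy between sigmoid(logits) and 0/1 targets."""
    probs = np.clip(sigmoid(logits), eps, 1.0 - eps)  # avoid log(0)
    return float(-np.mean(targets * np.log(probs)
                          + (1.0 - targets) * np.log(1.0 - probs)))

# Toy batch: two candidates with zero logits, one clicked and one not.
logits = np.array([0.0, 0.0])
targets = np.array([1.0, 0.0])
loss = bce_loss(logits, targets)
```

With zero logits, both predicted probabilities are 0.5, so the loss equals \(\ln 2\).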

Result

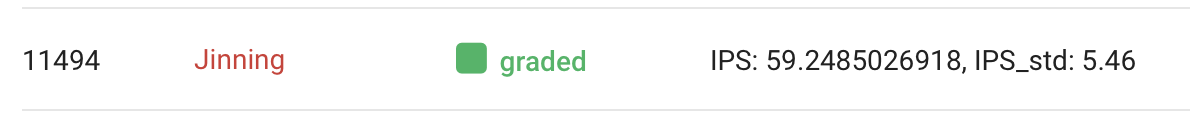

The result really surprised me! I got IPS=59.249, which is much higher than the score of the first-place entry in the CrowdAI challenge, even though I trained for only \(2\) epochs and did not apply any ensemble.

I regret not taking part in this challenge last year. Otherwise I might have won the 2000-dollar award.

Other methods and the use of propensity are still to be completed.

Usage of my code

Get the code:

git clone https://github.com/jinningli/ad-placement-pytorch.git

Requirements:

CUDA

pytorch

scipy

numpy

crowdai

Build dataset

Download from https://www.crowdai.org/challenges/nips-17-workshop-criteo-ad-placement-challenge/dataset_files

gunzip criteo_train.txt.gz

gunzip criteo_test_release.txt.gz

mkdir datasets/crowdai

mv criteo_train.txt datasets/crowdai/criteo_train.txt

mv criteo_test_release.txt datasets/crowdai/test.txt

cd datasets/crowdai

python3 getFirstLine.py --data criteo_train.txt --result train.txt

Train

python3 train.py --dataroot datasets/criteo --name Saved_Name --batchSize 5000 --gpu 0 --no_cache

Test

python3 test.py --dataroot datasets/criteo --name Saved_Name --batchSize 5000 --gpu 0 --no_cache

Checkpoints and test results will be saved in checkpoints/Saved_Name.

All options

--display_freq Frequency of showing train/test results on screen.

--continue_train Continue training.

--lr_policy Learning rate policy: same|lambda|step|plateau.

--epoch How many epochs.

--save_epoch_freq Epoch frequency of saving model.

--gpu Which gpu device, -1 for CPU.

--dataroot Dataroot path.

--checkpoints_dir Models are saved here.

--name Name for saved directory.

--batchSize Batch size.

--lr Learning rate.

--which_epoch Which epoch to load? default is the latest.

--no_cache Don’t cache the processed dataset (the cache makes loading faster next time).

--random Randomize (Shuffle) input data.

--nThreads Number of threads for loading data.